Photoaconpan (Duplicate): Duplicate Identifier Metrics

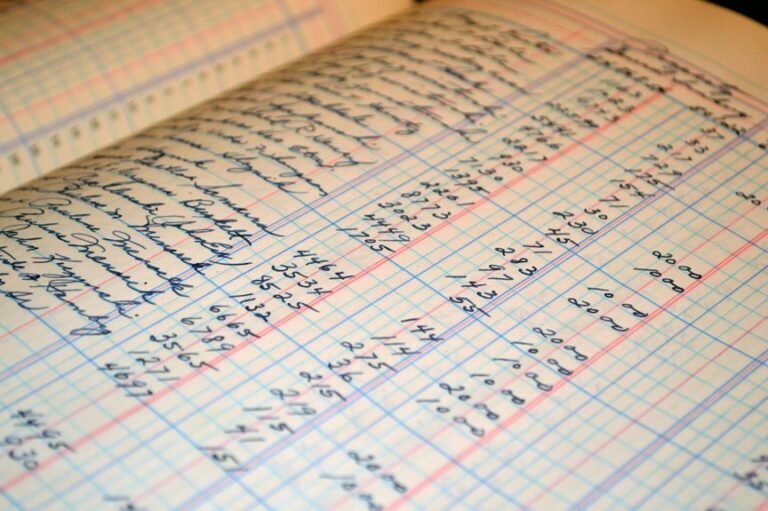

Managing duplicate identifiers is a core part of data integrity. When the same entity is recorded under more than one entry, the counts, joins, and reports built on that data become inaccurate, and organizations often struggle to find and resolve such entries. Specific metrics make this work measurable and help streamline data management, so understanding them is essential for reducing redundancy. The value of effective duplicate detection also extends beyond accuracy, affecting the quality of decision-making and overall operational efficiency.

Understanding Duplicate Identifiers

Understanding duplicate identifiers is essential for maintaining data integrity: when one entity is recorded twice, it can be counted twice, producing significant discrepancies in data analysis and reporting.

Duplication can arise across many identifier types, for example when the same ID is stored with different capitalization, spacing, or punctuation, or is entered separately by two systems. Effective duplicate detection strategies are therefore vital for keeping datasets accurate and supporting informed decision-making.
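As a minimal sketch of how formatting differences alone can create duplicates, the Python below groups identifiers by a normalized key. It assumes identifiers are strings and that case, whitespace, hyphens, dots, and underscores carry no meaning; `normalize_id` and `find_exact_duplicates` are hypothetical helpers, and real normalization rules would need to match your own identifier scheme.

```python
import re
from collections import defaultdict


def normalize_id(raw: str) -> str:
    """Hypothetical canonical form: drop whitespace, hyphens, dots, underscores; uppercase."""
    return re.sub(r"[\s\-._]", "", raw).upper()


def find_exact_duplicates(ids):
    """Group raw identifiers by normalized key; keep only keys seen more than once."""
    groups = defaultdict(list)
    for raw in ids:
        groups[normalize_id(raw)].append(raw)
    return {key: raws for key, raws in groups.items() if len(raws) > 1}


print(find_exact_duplicates(["ab-123", "AB 123", "ab.123", "cd-456"]))
# → {'AB123': ['ab-123', 'AB 123', 'ab.123']}
```

Normalizing before comparing turns "same ID, different formatting" into an exact match, which is cheap to detect with a dictionary; it will not catch typos, which need a similarity measure instead.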

Addressing duplicates reduces errors and makes the insights drawn from analytical processes more reliable, which ultimately supports smoother day-to-day operations.

Metrics for Identifying Duplicates

To identify duplicates effectively, organizations rely on specific metrics that quantify how closely records match.

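Key tools include similarity scores, which measure how closely two identifiers match; threshold values, which set the score at or above which a pair is treated as a likely duplicate; and pattern recognition techniques, which check identifiers against an expected format. Together they improve detection accuracy and support identifier validation.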
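The three ideas can be combined in a short sketch. This version swaps in Python's standard `difflib.SequenceMatcher` ratio as the similarity score; the 0.8 threshold and the two-letters-plus-four-digits format in `ID_PATTERN` are illustrative assumptions, not values taken from this article, and the helper names are hypothetical.

```python
import re
from difflib import SequenceMatcher
from itertools import combinations

# Hypothetical identifier format: two uppercase letters followed by four digits.
ID_PATTERN = re.compile(r"[A-Z]{2}\d{4}")
# Assumed cut-off; tune it against labeled examples from your own data.
THRESHOLD = 0.8


def similarity(a: str, b: str) -> float:
    """Similarity score in [0, 1] from difflib's SequenceMatcher."""
    return SequenceMatcher(None, a, b).ratio()


def is_valid(identifier: str) -> bool:
    """Pattern check: the whole identifier must match the expected format."""
    return ID_PATTERN.fullmatch(identifier) is not None


def likely_duplicates(ids, threshold=THRESHOLD):
    """Return (a, b, score) for every pair whose score meets the threshold."""
    pairs = []
    for a, b in combinations(ids, 2):
        score = similarity(a, b)
        if score >= threshold:
            pairs.append((a, b, round(score, 3)))
    return pairs


print(likely_duplicates(["AB1234", "AB1243", "CD5678"]))
# → [('AB1234', 'AB1243', 0.833)]
print(is_valid("AB1234"), is_valid("ab1234"))
# → True False
```

Comparing every pair is quadratic, so on large datasets candidate pairs are usually narrowed first (for example by grouping on a shared prefix) before scoring; raising the threshold trades missed duplicates for fewer false matches.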

Benefits of Using Duplicate Identifier Metrics

Although organizations differ in their specific needs, the benefits of duplicate identifier metrics apply broadly.

These metrics enhance data accuracy by identifying and resolving duplicates, leading to more reliable information.
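One simple way to track this, offered as an illustration rather than a standard definition, is a duplicate rate: the share of records that are redundant copies, $(n - u)/n$ for $n$ records with $u$ distinct keys. The sketch below assumes a caller-supplied `key` function for matching, and `duplicate_rate` and `resolve_duplicates` are hypothetical helpers. Its keep-first resolution policy is also an assumption; real pipelines often merge fields instead.

```python
from typing import Callable, Iterable, List


def duplicate_rate(ids: Iterable[str], key: Callable[[str], str] = lambda s: s) -> float:
    """Share of records that are redundant copies: (total - unique) / total."""
    records = list(ids)
    if not records:
        return 0.0
    unique = {key(r) for r in records}
    return (len(records) - len(unique)) / len(records)


def resolve_duplicates(ids: Iterable[str], key: Callable[[str], str] = lambda s: s) -> List[str]:
    """Keep the first record seen for each key and drop later copies."""
    seen = set()
    kept = []
    for r in ids:
        k = key(r)
        if k not in seen:
            seen.add(k)
            kept.append(r)
    return kept


records = ["A1", "a1", "B2", "B2"]
print(duplicate_rate(records, key=str.upper))   # → 0.5
cleaned = resolve_duplicates(records, key=str.upper)
print(cleaned, duplicate_rate(cleaned, key=str.upper))  # → ['A1', 'B2'] 0.0
```

Measuring the rate before and after resolution gives a concrete number for how much redundancy was removed, and the choice of `key` determines what counts as "the same" record.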

They also improve efficiency: with fewer redundant records to store, reconcile, and correct, teams can allocate their resources more effectively.

Ultimately, adopting these metrics supports informed decision-making and fosters organizational agility.

Conclusion

Adopting duplicate identifier metrics gives organizations a clear, measurable view of their data integrity. By systematically identifying and resolving duplicates, organizations improve the accuracy of their datasets and streamline their decision-making. This proactive approach reduces redundancy and operational inefficiency and encourages agility and precision. Used well, these metrics help organizations handle complex data with confidence, ensuring that the insights they draw are both reliable and actionable.